Google Reports First Instance of AI-Powered Zero-Day Exploit Targeting 2FA Systems

Google's Threat Intelligence Group says it has "high confidence" that cybercriminals used an artificial intelligence model to find and exploit a security flaw in a widely used system administration tool.

Google's Threat Intelligence Group says it has uncovered what appears to be the first instance of cybercriminals using artificial intelligence to create a zero-day exploit.

In a blog post published on Tuesday, the team said it had "observed prominent cyber crime threat actors partnering to plan a mass vulnerability exploitation operation," using a zero-day flaw that let them bypass two-factor authentication in an unnamed "popular open-source, web-based system administration tool."

The exploit required valid user credentials to begin with, but it then bypassed the second authentication factor, a security measure frequently used to protect crypto accounts and digital wallets.

Artificial intelligence technology has seen growing adoption in both the cybersecurity sector and among cryptocurrency hackers looking to execute exploits or perpetrate scams. Last month, AI company Anthropic reported that its latest AI model, Claude Mythos, discovered thousands of software security flaws throughout major systems.

Google said it had "high confidence that the actor likely leveraged an AI model to support the discovery and weaponization of this vulnerability," given that the exploit script contained a hallucination and followed a format "highly characteristic" of the training data used in AI models.

While the report did not identify the specific threat actor, Google noted that both China and North Korea have "demonstrated significant interest in capitalizing on AI for vulnerability discovery."

LLMs excel at high-level flaw identification

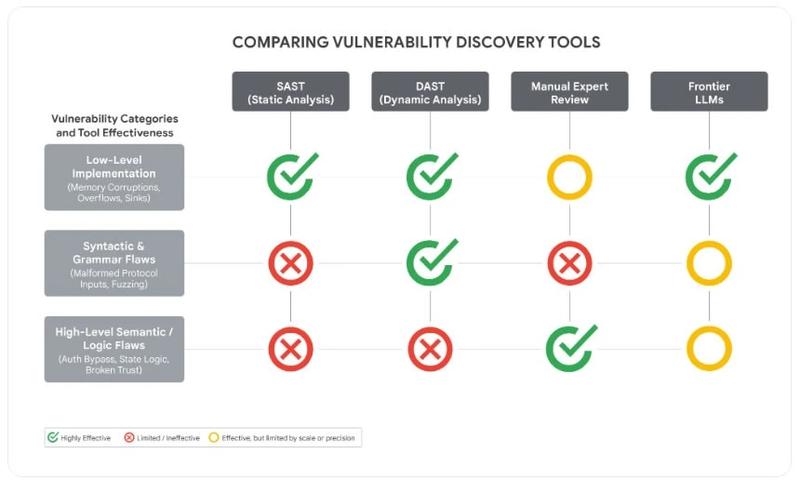

According to Google, the vulnerability did not stem from "common implementation errors" such as memory corruption, but from a "high-level semantic logic flaw" in which the developer hardcoded a trust assumption into the code.

This suggests the attackers used a frontier large language model (LLM), since these models excel at detecting high-level security flaws and "hardcoded static anomalies," according to Google's analysis.
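Google did not disclose the affected tool or the flaw itself, so the following is only a generic sketch of what a hardcoded trust assumption in a second-factor check can look like. Every name in it (`EXPECTED_CODES`, the `X-Internal-Request` header, both functions) is hypothetical and invented for illustration; it is not the vulnerability from the report.

```python
import hmac

# Hypothetical one-time codes, keyed by username (illustrative data only).
EXPECTED_CODES = {"alice": "492817"}


def second_factor_ok_flawed(user: str, code: str, headers: dict) -> bool:
    """Flawed: a hardcoded trust assumption lets some requests skip the check."""
    # Assumption baked into the code: only an internal proxy can set this
    # header. Nothing enforces that, so any client that sends it is trusted.
    if headers.get("X-Internal-Request") == "1":
        return True
    expected = EXPECTED_CODES.get(user)
    return expected is not None and hmac.compare_digest(code, expected)


def second_factor_ok_fixed(user: str, code: str, headers: dict) -> bool:
    """Safer: the code is always verified; request headers grant nothing."""
    expected = EXPECTED_CODES.get(user)
    return expected is not None and hmac.compare_digest(code, expected)


# A wrong code plus the "trusted" header passes the flawed check only.
spoofed = {"X-Internal-Request": "1"}
print(second_factor_ok_flawed("alice", "000000", spoofed))
print(second_factor_ok_fixed("alice", "000000", spoofed))
```

Nothing in the flawed version is a memory-safety bug or a crash; the code runs exactly as written, and the problem lies in what it assumes about who can send the request. That is the sense in which such a flaw is "semantic" rather than an implementation error.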

Several malware families, including PROMPTFLUX, HONESTCUE and CANFAIL, also use LLMs to evade detection by generating decoy or filler code that obscures their malicious logic, Google reported.

Industrialized LLM abuse is increasing

The abuse of LLM access is becoming industrialized: cybercriminals have built automated pipelines that rotate through premium AI accounts, aggregate API keys and circumvent safety guardrails at scale, in effect running adversarial campaigns funded by the exploitation of trial accounts.

"By leveraging anti-detect browsers and account-pooling services, actors are attempting to maintain high-volume, anonymized access to premium LLM tiers, effectively industrializing their adversarial workflows," the report said.

Google concluded that as organizations continue to integrate LLMs into their production environments, the AI software ecosystem has become a prime target for exploitation.

The company observed that adversaries are increasingly targeting the integrated components that give AI systems their functionality, including autonomous skills and "third-party data connectors." However, threat actors have not yet achieved breakthrough capabilities that would let them circumvent the core security logic of frontier models, Google said.