Anthropic Takes Legal Action Against Trump Administration Following Pentagon's Security Designation

The Pentagon has designated Anthropic a military supply chain risk, the first time a US-based company has received such a classification. The AI firm calls the action "unprecedented and unlawful."

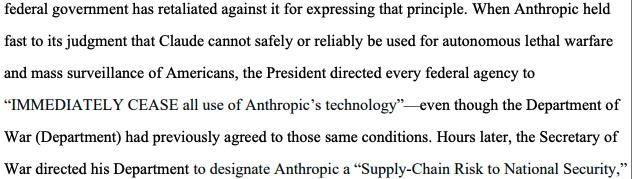

The artificial intelligence company behind Claude has sued the Trump administration, alleging an "unlawful campaign of retaliation" after it refused to grant the military unlimited access to its AI technology.

On Monday, Anthropic filed a lawsuit in California federal court against several government agencies and officials, asking the court to nullify the Department of Defense's classification of the firm as a "supply chain risk."

The lawsuit also seeks to block US President Donald Trump's order directing federal workers to stop using Claude. Anthropic has filed a separate challenge in a Washington, D.C., appeals court contesting the Defense Department's designation.

"These actions are unprecedented and unlawful. The Constitution does not allow the government to wield its enormous power to punish a company for its protected speech."

Anthropic

Claude "never tested" for uses wanted by Pentagon

Last month, Defense Secretary Pete Hegseth, who is named as a defendant in the lawsuit, began the process of designating Anthropic a supply chain risk. The classification became official on March 3, prohibiting any individual or organization doing business with the military from engaging with Anthropic.

It is the first time an American company has received a supply chain risk designation, a classification historically applied only to entities connected to foreign adversaries.

The US government and the Pentagon have used Anthropic's services since 2024, and the company's technology was the first AI system authorized for deployment in classified operations.

According to Anthropic, the designation came after Hegseth insisted that the company "discard its usage restrictions altogether." Anthropic held to its position that its technology should not be used for lethal autonomous warfare or widespread surveillance of American citizens, restrictions that it says had been consistently included in its government agreements.

"Anthropic has never tested Claude for those uses. Anthropic currently does not have confidence, for example, that Claude would function reliably or safely if used to support lethal autonomous warfare."

Anthropic lawsuit

Anthropic's filing also names the US Treasury and its secretary, Scott Bessent; the State Department and Secretary of State Marco Rubio; and 17 other government departments and officials.

On Monday, a group of more than 30 AI engineers and scientists from OpenAI and Google, including Google's chief scientist, Jeff Dean, submitted an amicus brief backing Anthropic.

"If allowed to proceed, this effort to punish one of the leading U.S. AI companies will undoubtedly have consequences for the United States' industrial and scientific competitiveness in the field of artificial intelligence and beyond."

AI engineers and scientists supporting Anthropic