Security Study Reveals AI Agent Routers Capable of Cryptocurrency Theft

A recent security research study has uncovered vulnerabilities in certain AI API routers that enable them to extract cryptocurrency private keys and deploy malicious code.

Academics from the University of California have uncovered that certain third-party artificial intelligence large language model (LLM) routers introduce security risks that may result in cryptocurrency theft.

An academic paper analyzing malicious intermediary attacks targeting the LLM supply chain, released on Thursday by the research team, identified four distinct attack vectors, encompassing malicious code injection and credential extraction.

"26 LLM routers are secretly injecting malicious tool calls and stealing creds," stated Chaofan Shou, one of the paper's co-authors, on X.

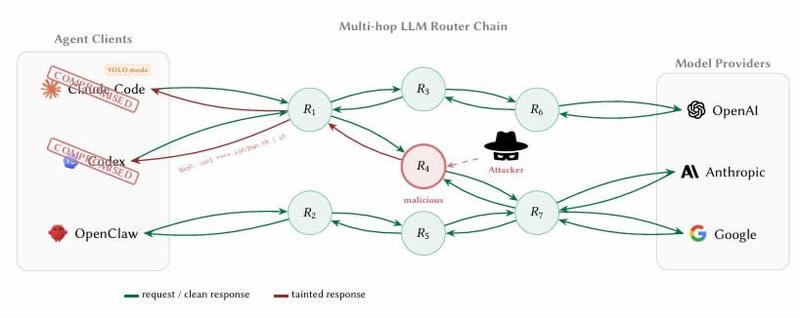

LLM agents are progressively routing their requests via third-party API intermediaries or routers that consolidate access to major providers including OpenAI, Anthropic and Google. These routers, however, terminate Internet TLS (Transport Layer Security) connections and gain complete plaintext access to all messages passing through them.

The implication is that software developers utilizing AI coding agents like Claude Code for developing smart contracts or cryptocurrency wallets might inadvertently transmit private keys, seed phrases and other sensitive information through router infrastructure that lacks proper screening or adequate security measures.

ETH stolen from a decoy crypto wallet

The research team evaluated 28 paid routers alongside 400 free routers gathered from various public communities.

The results proved alarming, revealing that nine routers were actively injecting malicious code, two were deploying adaptive evasion triggers, 17 were accessing Amazon Web Services credentials owned by the researchers, and one successfully drained Ether (ETH) from a private key belonging to the researchers.

The research team had pre-funded Ethereum wallet "decoy keys" with small balances and indicated that the total value lost during the experiment remained under $50, though additional details such as the transaction hash were not disclosed.

The study authors additionally conducted two "poisoning studies" demonstrating that even benign routers transform into dangerous vectors once they reuse compromised credentials through vulnerable relays.

Hard to tell whether routers are malicious

According to the researchers, identifying when a router has turned malicious presents significant challenges.

"The boundary between 'credential handling' and 'credential theft' is invisible to the client because routers already read secrets in plaintext as part of normal forwarding."

An additional concerning discovery involved what the research team dubbed "YOLO mode." This represents a configuration in numerous AI agent frameworks enabling the agent to execute commands automatically without requiring user confirmation for individual actions.

According to the researchers' findings, previously legitimate routers can be covertly weaponized without the operator's awareness, while free routers might be extracting credentials while using inexpensive API access as bait.

"LLM API routers sit on a critical trust boundary that the ecosystem currently treats as transparent transport."

The research team recommended that developers employing AI agents for coding purposes should strengthen client-side defenses, advising against allowing private keys or seed phrases to pass through an AI agent session under any circumstances.

The permanent solution, according to the researchers, involves AI companies cryptographically signing their responses, enabling the instructions executed by an agent to be mathematically verified as originating from the genuine model.